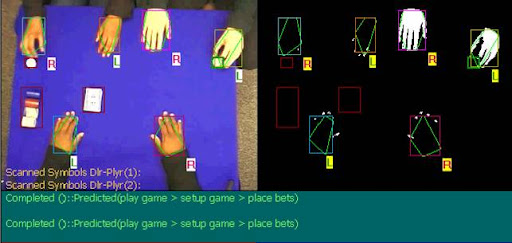

Paper AAAI (2002): "Recognizing Multitasked Activities from Video using Stochastic Context-Free Grammar"

D. Moore and I. Essa (2002). “Recognizing multitasked activities from video using stochastic context-free grammar”, in Proceedings of AAAI 2002. [PDF | Project Site]

Abstract

In this paper, we present techniques for recognizing complex, multitasked activities from video. Visual information like image features and motion appearances, combined with domain-specific information, like object context is used initially to label events. Each action event is represented with a unique symbol, allowing for a sequence of interactions to be described as an ordered symbolic string. Then, a model of stochastic context-free grammar (SCFG), which is developed using underlying rules of an activity, is used to provide the structure for recognizing semantically meaningful behavior over extended periods. Symbolic strings are parsed using the Earley-Stolcke algorithm to determine the most likely semantic derivation for recognition. Parsing substrings allows us to recognize patterns that describe high-level, complex events taking place over segments of the video sequence. We introduce new parsing strategies to enable error detection and recovery in stochastic context-free grammar and methods of quantifying group and individual behavior in activities with separable roles. We show through experiments, with a popular card game, the recognition of high-level narratives of multi-player games and the identification of player strategies and behavior using computer vision.
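
To make the idea of scoring a symbolic event string against an SCFG concrete, here is a minimal sketch. It is not the paper's method: the paper parses with the Earley-Stolcke algorithm, while this sketch swaps in a simpler probabilistic CYK (Viterbi) parser, which requires the grammar to be in Chomsky normal form. The grammar, the event symbols (`bet`, `deal`), the nonterminal names, and the rule probabilities are all hypothetical and chosen only for illustration.

```python
from collections import defaultdict

# Hypothetical toy SCFG in Chomsky normal form (NOT taken from the paper).
# Lexical rules: (nonterminal, event symbol) -> probability
LEXICAL = {
    ("Bets", "bet"): 0.7,
    ("Deal", "deal"): 1.0,
}
# Binary rules: (parent, left child, right child) -> probability
BINARY = {
    ("Round", "Bets", "Deal"): 1.0,
    ("Bets", "Bets", "Bets"): 0.3,
}


def viterbi_parse_probability(tokens, start="Round"):
    """Return the probability of the most likely derivation of `tokens`
    from `start`, or 0.0 if the string is not in the grammar's language.

    Uses probabilistic CYK as a stand-in for Earley-Stolcke parsing.
    """
    n = len(tokens)
    if n == 0:
        return 0.0

    # best[(i, j)][X] = probability of the best derivation of tokens[i:j] from X
    best = defaultdict(dict)

    # Spans of length 1: apply lexical rules to each detected event symbol.
    for i, tok in enumerate(tokens):
        for (lhs, term), p in LEXICAL.items():
            if term == tok and p > best[(i, i + 1)].get(lhs, 0.0):
                best[(i, i + 1)][lhs] = p

    # Longer spans: combine two adjacent sub-spans with a binary rule.
    for span in range(2, n + 1):
        for i in range(0, n - span + 1):
            j = i + span
            for k in range(i + 1, j):
                for (lhs, left, right), p in BINARY.items():
                    cand = (
                        p
                        * best[(i, k)].get(left, 0.0)
                        * best[(k, j)].get(right, 0.0)
                    )
                    if cand > best[(i, j)].get(lhs, 0.0):
                        best[(i, j)][lhs] = cand

    return best[(0, n)].get(start, 0.0)


if __name__ == "__main__":
    # A well-formed event string versus one that violates the grammar.
    print(viterbi_parse_probability(["bet", "bet", "deal"]))
    print(viterbi_parse_probability(["deal", "bet"]))
```

Under this toy grammar, the string `bet bet deal` has a single derivation with probability of about 0.147 (0.3 × 0.7 × 0.7 × 1.0), while `deal bet` scores 0.0 because no rule sequence produces it. A zero score for an observed string is the kind of situation where error detection and recovery strategies, as mentioned in the abstract, become relevant; this sketch does not attempt them.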