William Mong Distinguished Lecture at the University of Hong Kong on "Video Cameras are Everywhere: Data-Driven Methods for Video Analysis and Enhancement"

Abstract

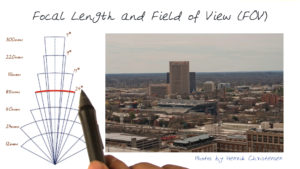

In this talk, I will begin by describing the pervasiveness of image and video content and how it is growing with the ubiquity of cameras, which motivates the need for better tools for analyzing and enhancing video. I will then review some of our earlier work on temporal video modeling and lead up to our current work, focusing on two main projects: (1) our approach to video stabilization, currently implemented and running on YouTube, along with its extensions; and (2) a robust and scalable method for video segmentation.

I will describe, in some detail, our video stabilization method, which generates stabilized videos and is in wide use. It goes beyond conventional filtering, which only suppresses high-frequency jitter. It also supports the removal of rolling shutter distortions, which are common in modern CMOS cameras that capture each frame one scan line at a time, resulting in non-rigid image distortions such as shear and wobble. Our method does not rely on a priori knowledge and works on video from any camera or on legacy footage. I will showcase examples of this approach and discuss how the method was launched and now runs on YouTube, serving millions of users.
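For readers unfamiliar with the "conventional filtering" baseline mentioned above, the following is a minimal sketch of it, not of the method presented in the talk. It assumes the camera motion has already been reduced to a one-dimensional path (for example, cumulative horizontal translation per frame); the function names `smooth_path` and `stabilizing_offsets` are hypothetical and chosen only for illustration.

```python
def smooth_path(path, radius):
    """Moving-average low-pass filter over a 1-D camera path.

    The averaging window is clipped at both ends of the sequence.
    """
    n = len(path)
    smoothed = []
    for i in range(n):
        lo = max(0, i - radius)
        hi = min(n, i + radius + 1)
        window = path[lo:hi]
        smoothed.append(sum(window) / len(window))
    return smoothed


def stabilizing_offsets(path, radius):
    """Per-frame shift that moves each frame from the original path
    onto the smoothed path (assumed translation-only motion model)."""
    return [s - p for s, p in zip(smooth_path(path, radius), path)]


# A jittery path: frame-to-frame shake around a steady position.
jittery = [0.0, 1.0, 0.0, 1.0, 0.0]
offsets = stabilizing_offsets(jittery, radius=1)
```

A filter like this only damps high-frequency shake and knows nothing about scan-line timing, which is why it cannot by itself address rolling shutter shear or wobble.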

Then I will describe an efficient and scalable technique for spatio-temporal segmentation of long video sequences using a hierarchical graph-based algorithm. This hierarchical approach generates high-quality segmentations, and we demonstrate the use of this segmentation as users interact with the video, enabling efficient annotation of objects within the video. I will also show recent work on how this segmentation and annotation can be used for dynamic scene understanding.
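As a rough illustration of what a graph-based segmentation pass can look like, here is a minimal sketch in the style of the well-known Felzenszwalb and Huttenlocher merge criterion. That the talk's algorithm uses this exact criterion is an assumption, as are the function name `segment_graph`, the scale parameter `k`, and the edge representation. For video, each node would stand for a pixel in a frame, with edges to spatial neighbors and to neighbors in adjacent frames, weighted by appearance difference.

```python
def segment_graph(num_nodes, edges, k):
    """Greedy region merging over a weighted graph.

    edges: list of (weight, u, v) tuples.
    k: scale parameter (assumed); larger values favor larger regions.
    Returns one region label per node.
    """
    parent = list(range(num_nodes))
    size = [1] * num_nodes
    internal = [0.0] * num_nodes  # largest edge weight merged into each region

    def find(x):
        # Union-find lookup with path halving.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    # Visit edges from most similar to least similar.
    for w, u, v in sorted(edges):
        ru, rv = find(u), find(v)
        if ru == rv:
            continue
        # Merge only if the edge is no heavier than either region's
        # internal variation plus a size-dependent tolerance.
        if w <= min(internal[ru] + k / size[ru], internal[rv] + k / size[rv]):
            parent[rv] = ru
            size[ru] += size[rv]
            internal[ru] = w  # edges are sorted, so w is the new maximum
    return [find(i) for i in range(num_nodes)]


# Two tight clusters of three nodes joined by one weak (high-weight) edge.
edges = [(0.1, 0, 1), (0.1, 1, 2), (5.0, 2, 3), (0.1, 3, 4), (0.1, 4, 5)]
labels = segment_graph(6, edges, k=1.0)
```

One plausible way to obtain a hierarchy from such a pass is to treat the resulting regions as nodes of a new graph and repeat with a larger `k`; this is offered as a sketch of the idea rather than a description of the presented system.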