Paper in ISWC 2015: "Predicting Daily Activities from Egocentric Images Using Deep Learning"

Paper

Predicting Daily Activities from Egocentric Images Using Deep Learning

In: Proceedings of International Symposium on Wearable Computers (ISWC), 2015.

Abstract

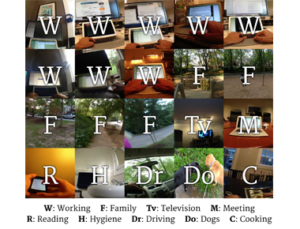

We present a method to analyze images taken from a passive egocentric wearable camera along with contextual information, such as time and day of the week, to learn and predict the everyday activities of an individual. We collected a dataset of 40,103 egocentric images over 6 months with 19 activity classes and demonstrate the benefit of state-of-the-art deep learning techniques for learning and predicting daily activities. Classification is conducted using a Convolutional Neural Network (CNN) with a classification method we introduce called a late fusion ensemble. This late fusion ensemble incorporates relevant contextual information and increases our classification accuracy. Our technique achieves an overall accuracy of 83.07% in predicting a person’s activity across the 19 activity classes. We also demonstrate some promising results from two additional users by fine-tuning the classifier with one day of training data.
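To make the late fusion idea concrete, here is a minimal sketch in plain Python. It assumes the CNN has already produced a 19-way probability vector for an image, appends an encoding of the hour and weekday, and passes the fused vector to a second-stage classifier. The cyclic sine/cosine encoding, the nearest-centroid classifier, and every function name below are illustrative assumptions, not the method or code from the paper.

```python
import math

NUM_CLASSES = 19  # number of activity classes reported in the abstract


def encode_context(hour, weekday):
    """Hypothetical cyclic encoding of hour (0-23) and weekday (0-6)."""
    h = 2 * math.pi * hour / 24
    d = 2 * math.pi * weekday / 7
    return [math.sin(h), math.cos(h), math.sin(d), math.cos(d)]


def fuse(cnn_probs, hour, weekday):
    """Late fusion: append context features to the CNN's class probabilities."""
    if len(cnn_probs) != NUM_CLASSES:
        raise ValueError("expected one probability per activity class")
    return list(cnn_probs) + encode_context(hour, weekday)


def fit_centroids(vectors, labels):
    """Stand-in second-stage classifier: mean fused vector per label."""
    sums, counts = {}, {}
    for vec, label in zip(vectors, labels):
        if label not in sums:
            sums[label] = [0.0] * len(vec)
            counts[label] = 0
        sums[label] = [s + v for s, v in zip(sums[label], vec)]
        counts[label] += 1
    return {lab: [s / counts[lab] for s in sums[lab]] for lab in sums}


def predict(centroids, vec):
    """Return the label whose centroid is closest in squared Euclidean distance."""
    def dist(label):
        return sum((a - b) ** 2 for a, b in zip(centroids[label], vec))
    return min(centroids, key=dist)


# Toy usage: the CNN is maximally unsure (uniform probabilities),
# so only the time of day separates the two made-up labels.
uniform = [1.0 / NUM_CLASSES] * NUM_CLASSES
train = [fuse(uniform, 8, 1), fuse(uniform, 20, 1)]
centroids = fit_centroids(train, ["morning_activity", "evening_activity"])
guess = predict(centroids, fuse(uniform, 9, 1))
```

In this toy case the image evidence is uninformative, so the context features alone decide the prediction, which is the kind of ambiguity that contextual information is meant to help resolve.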

- Presented at the 19th International Symposium on Wearable Computers, held at Grand Front Osaka in Umeda, Osaka, Japan, September 7-11, 2015.

- More details at Project Website.

- Media coverage: "Researchers Develop Deep-Learning Method to Predict Daily Activities" by GVU Center