Paper in IJCARS (2016) on “Automated video-based assessment of surgical skills for training and evaluation in medical schools”

Routine evaluation of basic surgical skills in medical schools requires considerable time and effort from supervising faculty. For each surgical trainee, a supervisor has to observe the trainee in person. Alternatively, supervisors may use training videos, which reduces some of the logistical overhead. All these approaches, however, are still very time-consuming and subject to human bias. In this paper, we present an automated system that assesses surgical skills by analyzing video data of surgical activities.

Research Blog: Motion Stills – Create beautiful GIFs from Live Photos

Kudos to the team from Machine Perception at Google Research that just launched the Motion Stills app to generate novel photos on an iOS device. This work is aimed in part at combining efforts like Video Textures and Video Stabilization, and a lot more. Source: Research Blog: Motion Stills – Create beautiful GIFs from Live […]

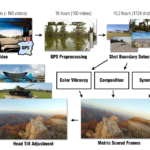

Paper in WACV 2016 on “Discovering Picturesque Highlights from Egocentric Vacation Videos”

Abstract: We present an approach for identifying picturesque highlights from large amounts of egocentric video data. Given a set of egocentric videos captured over the course of a vacation, our method analyzes the videos and looks for images that have good picturesque and artistic properties. We introduce novel techniques to automatically determine aesthetic features […]

Paper in MICCAI (2015): "Automated Assessment of Surgical Skills Using Frequency Analysis"

Abstract: We present an automated framework for visual assessment of the expertise level of surgeons using the OSATS (Objective Structured Assessment of Technical Skills) criteria. A video analysis technique for extracting motion quality via frequency coefficients is introduced. The framework is tested in a case study that involved analysis of videos of medical students with different expertise […]

2015 C+J Symposium

Source: 2015 C+J Symposium. Participated in the 4th Computation+Journalism Symposium, October 2-3, in New York, NY, at The Brown Institute for Media Innovation, Pulitzer Hall, Columbia University. Keynotes were Lada Adamic (Facebook) and Chris Wiggins (Columbia, NYT), with 2 curated panels and 5 sessions of peer-reviewed papers. Past Symposiums were held in […]

Presentation at Max-Planck-Institut für Informatik in Saarbrücken (2015): "Video Analysis and Enhancement"

Video Analysis and Enhancement: Spatio-Temporal Methods for Extracting Content from Videos and Enhancing Video Output. Irfan Essa (prof.irfanessa.com), Georgia Institute of Technology, School of Interactive Computing. Hosted by Max-Planck-Institut für Informatik in Saarbrücken (Bernt Schiele, Director of Computer Vision and Multimodal Computing). Abstract: In this talk, I will start by describing the pervasiveness of image and video content, […]

Dagstuhl Workshop 2015: "Modeling and Simulation of Sport Games, Sport Movements, and Adaptations to Training"

Participated in the Dagstuhl Workshop on “Modeling and Simulation of Sport Games, Sport Movements, and Adaptations to Training” at the Dagstuhl Castle, September 13–16, 2015. Motivation Source: Schloss Dagstuhl: Seminar Homepage. Past Seminars on this topic include […]